Navigate in Console

This page explains how to navigate through the Certifai Console pages.

Prerequisites

You have installed the Toolkit locally and/or have a platform implementation, and you have opened a local or remote Console window.

General navigation

NOTE: To illustrate navigation through the Console, this section refers to the Banking: Loan Approval example reports provided with the Toolkit.

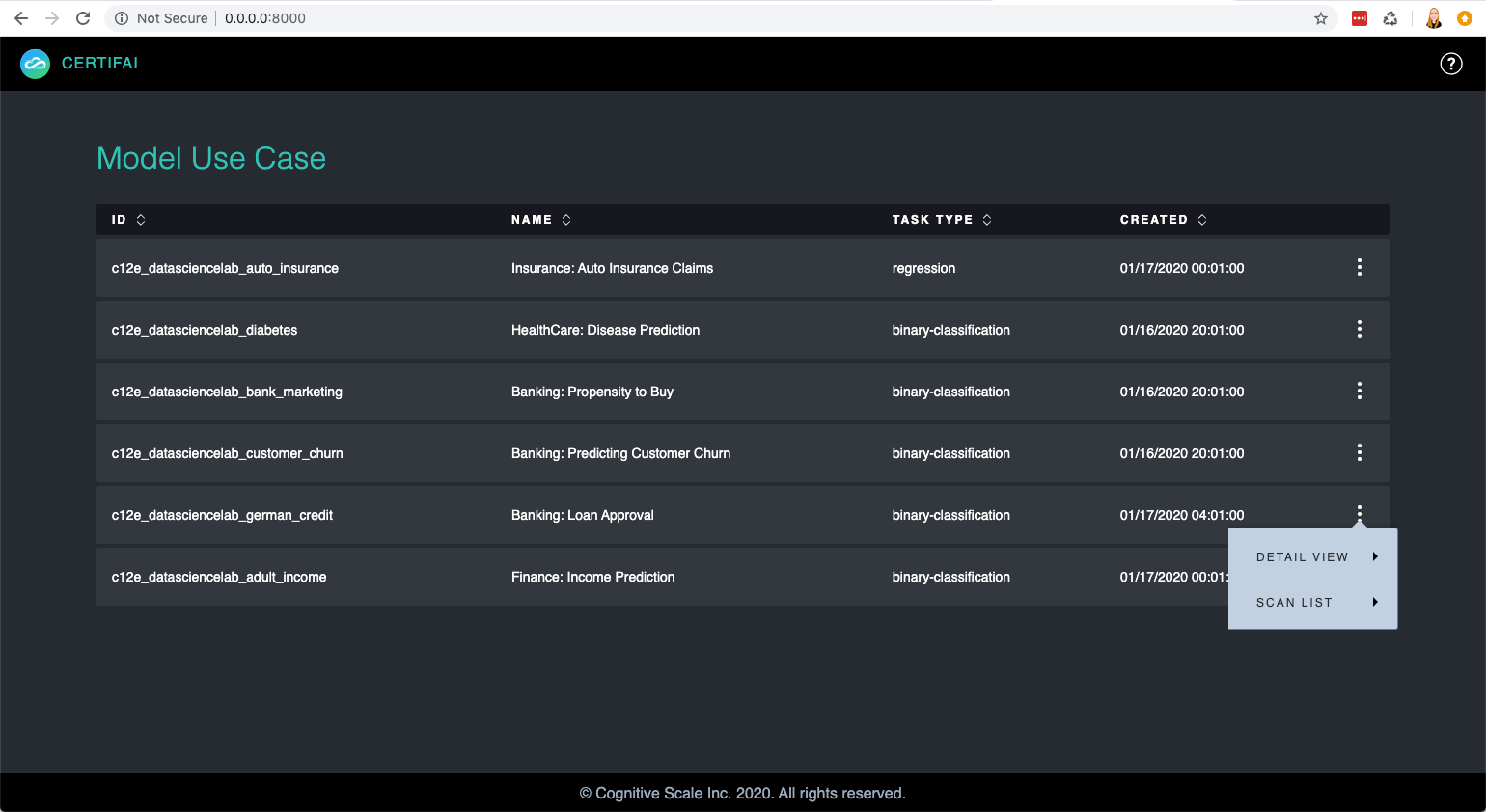

1. When you open the Console window in your browser (Chrome is recommended), you land on the Use Case list page.

2. Click the menu icon at the far right in the row of the Banking: Loan Approval example report. You have 2 options:

   - Click Scan List to display a list of scans that have been run for this use case.

   - Click Detail View to display a description and details for the selected use case.

3. On the Use Case Detail page in the top left navigation list, you have two options:

   - Click the Scan List link to view a list of scans for the use case (see image in step 2).

   - Click the Model Use Cases link to return to the Use Case list (see image in step 1).

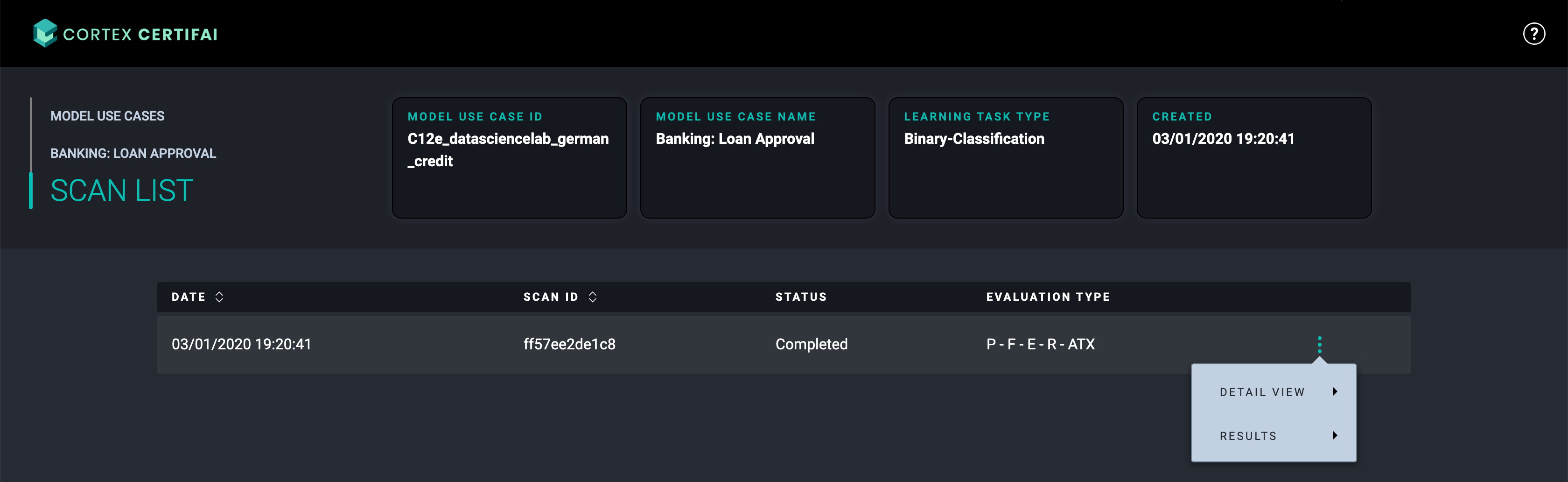

From the Scan List page in a scan item row at the far right, click the menu icon to select one of two options:

Click

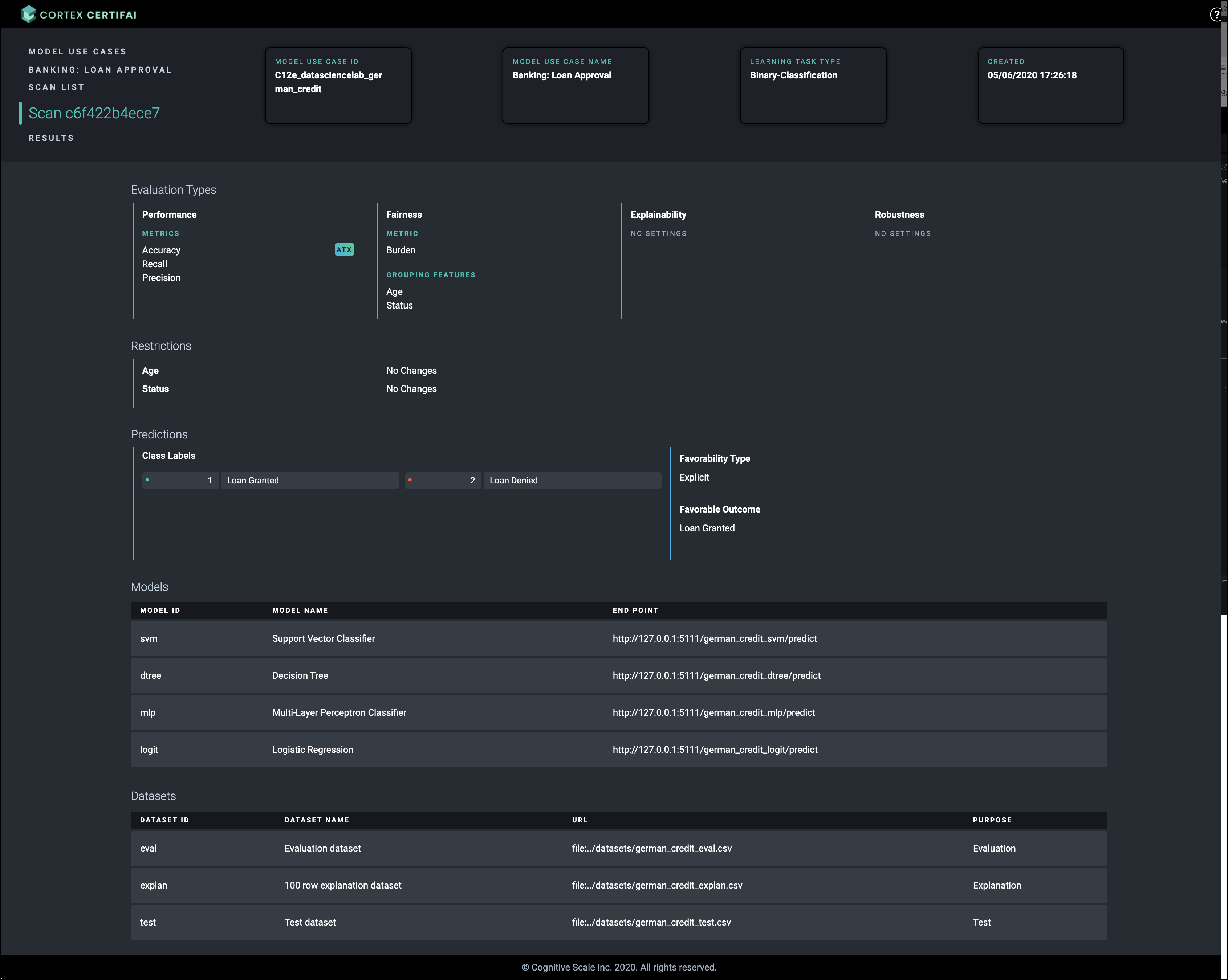

Detail Viewto view the Scan Details page. Model Use Case information is shown across the top. Scan specific details like evaluation types, restrictions, predictions, models, and datasets are displayed below. At the top left is the navigation link list.

Click

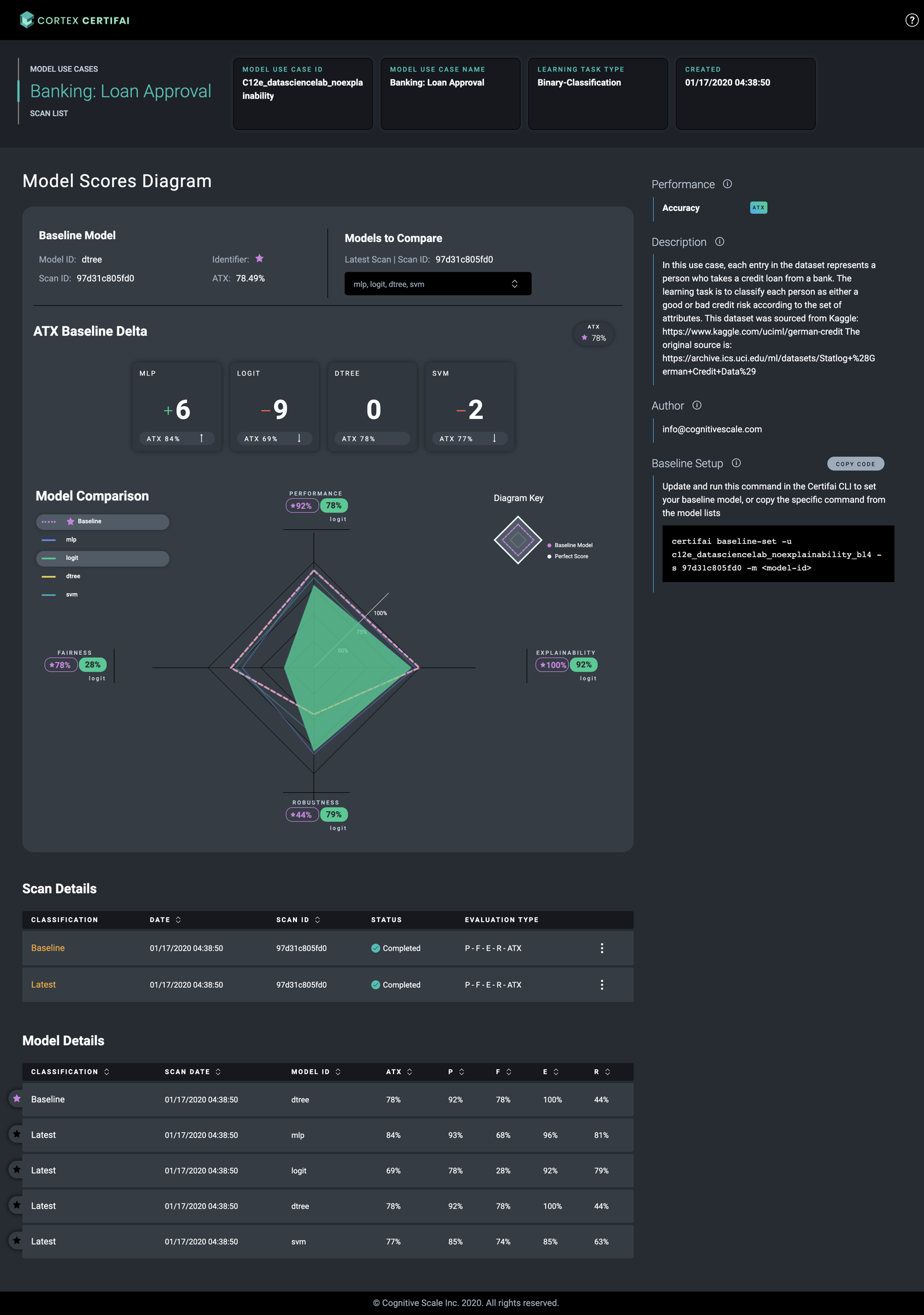

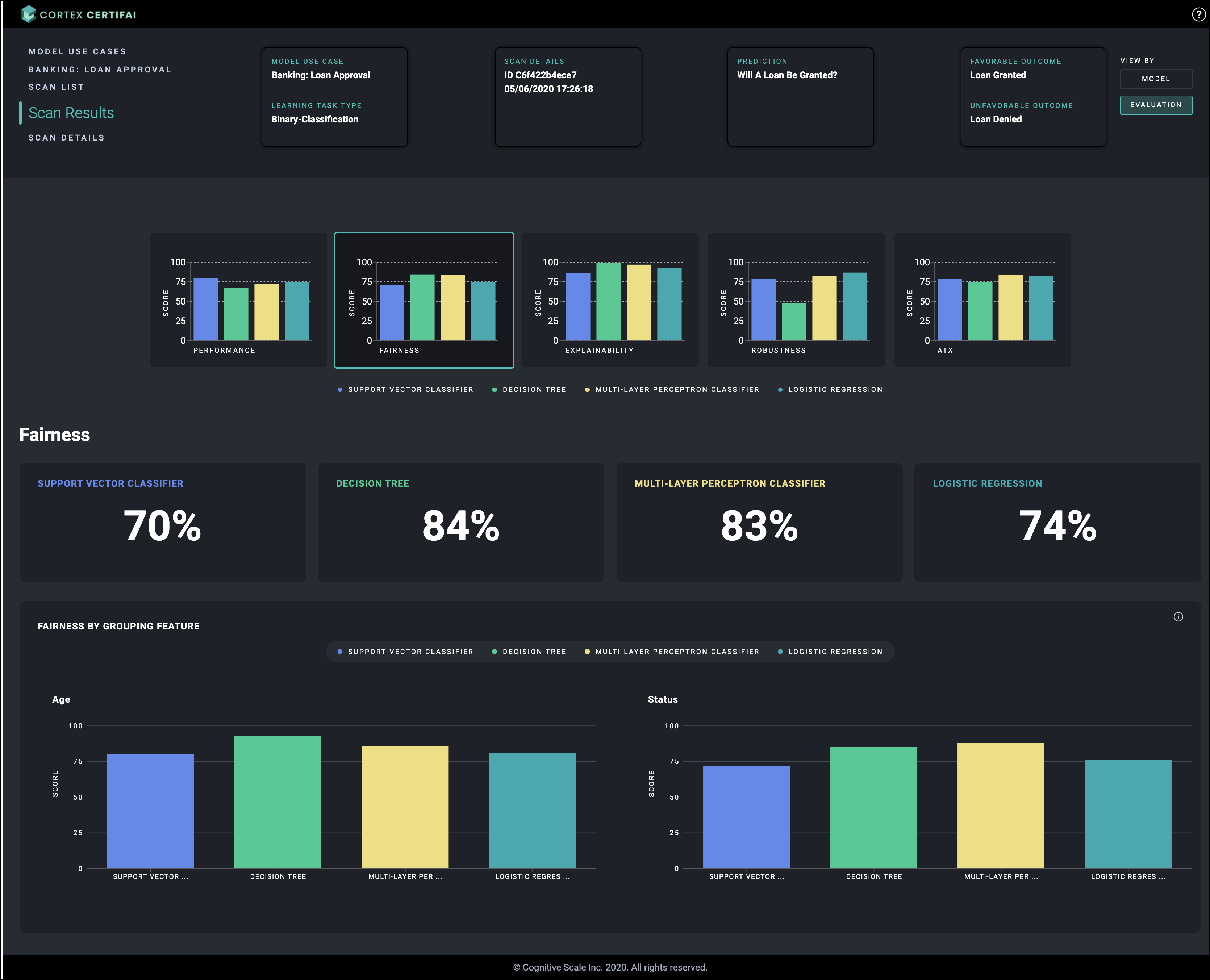

Resultsto go to the visualization for the scan. At the top right of the page, you can toggle between the Model and Evaluation views. View by Model is the default. At the top of the page from left to right you will find:- A link list with navigation options

- Use case-scan information that applies to all evaluation types

- The report type toggle that defaults to

Model, but can be switched toEvaluationview.

5. From the Scan Detail page top left navigation list, you can click:

   - The Model Use Cases link to return to the Use Case list page (see image in step 1).

   - The Use Case Name link (Banking: Loan Approval in this example) to view the Use Case Details page (see image in step 2).

   - The Scan List link to return to the list of scans that have been run for the use case (see image in step 2).

   - The Results link to view the visualizations for the scan (see image in step 4).

6. The Results link's landing page is an Evaluation Scan report (see the different Evaluations below). From this report you can navigate to:

   - Other pages in the Console (top left link list)

     - The Model Use Cases link returns you to the Use Case list page (see image in step 2).

     - The Use Case Name link (Banking: Loan Approval in this example) takes you to view the Model Scores Diagram, Use Case details, Scan information, and Model Scores table (see image in step 5).

     - The Scan List link returns you to the list of scans that have been run for the use case (see image in step 2).

     - The Scan ID link returns you to the Scan Details page (see image in step 4).

   - The Model view of the Evaluation (Evaluation/Model toggle at the top right) (see image in step 7)

   - The Evaluation visualization for the scan. Click the graph of an evaluation type to display the details for that evaluation type for all models included in the scan (see "Evaluation reports" below).

7. On the Model toggle landing page, a graph is displayed for each model included in the scan. Click a model graph to view evaluation type details for the selected model.

Evaluation reports

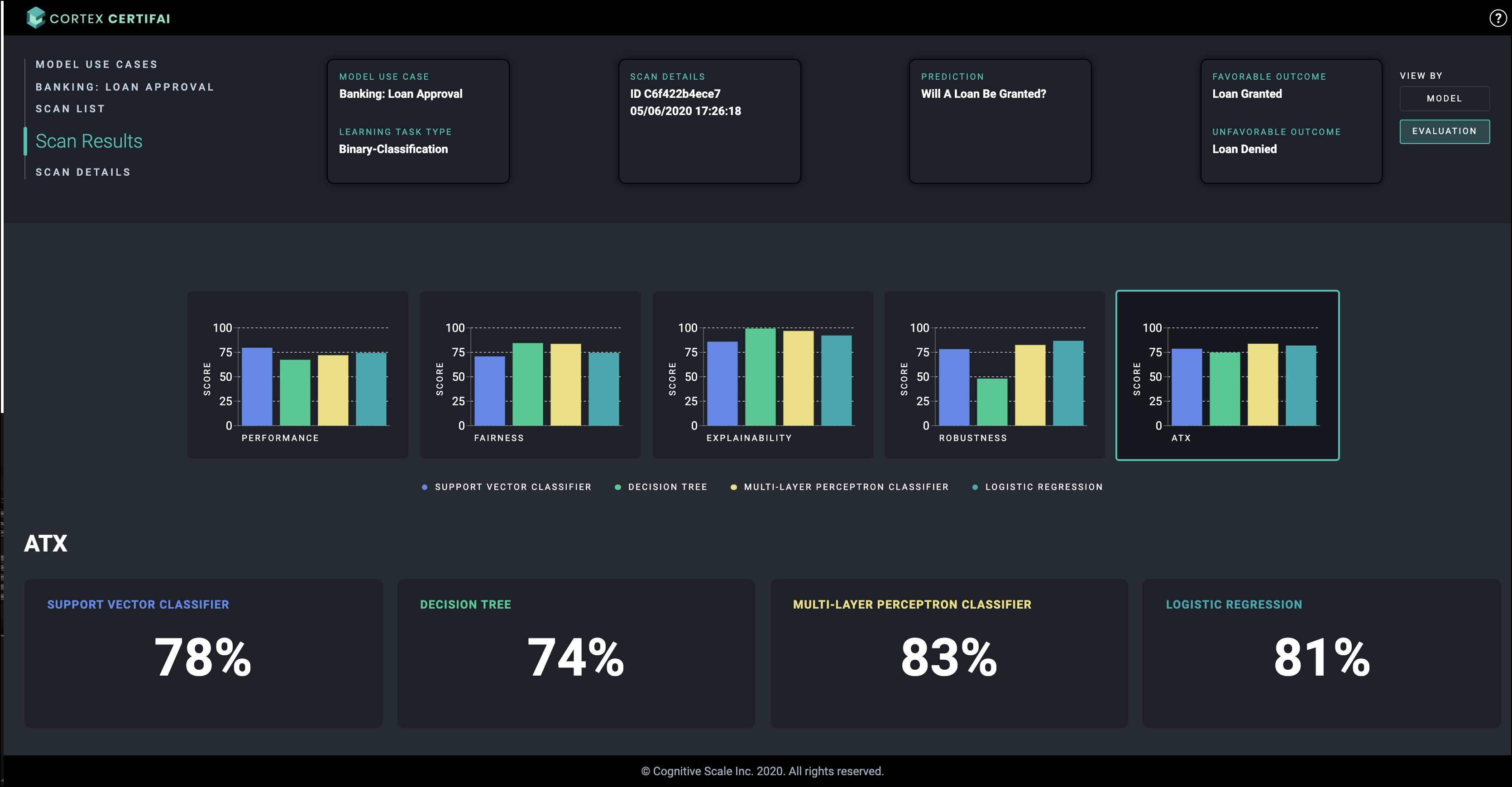

There are 5 possible evaluation types and corresponding reports that can be configured for scans. Four of these types each provide a different kind of trust score for the models. The fifth score provided in the visualizations, ATX, is a composite trust index score calculated from the other evaluation type scores. The fifth evaluation type provides detailed explanations for individual model predictions and does not provide a trust score.

Performance

Fairness

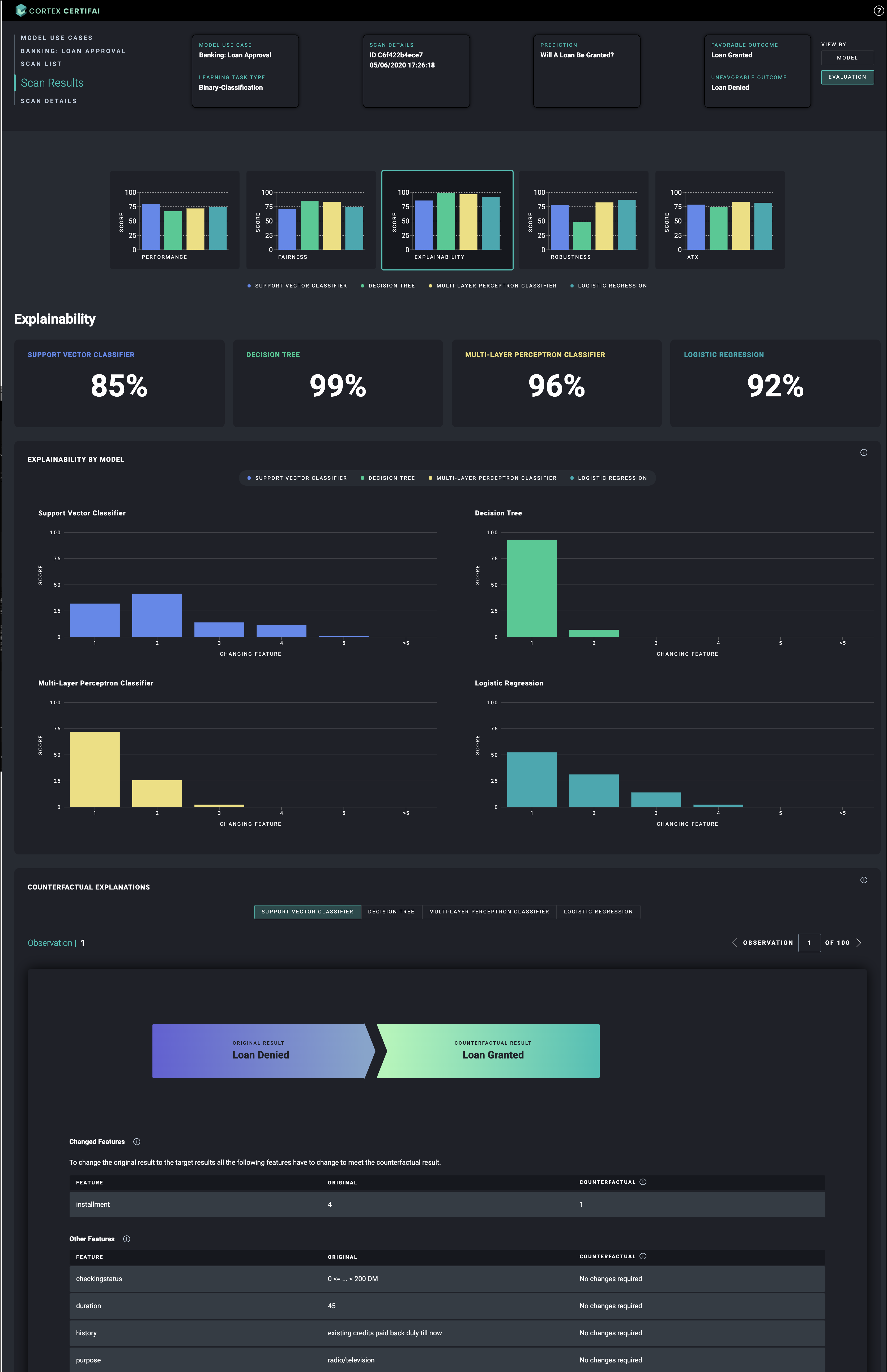

Explainability

For the Multiclass Classification task type, the explanations feature provides a toggle for viewing outcomes that are more or less favorable than the original. The toggle is used only for ordered multiclass classification use cases.

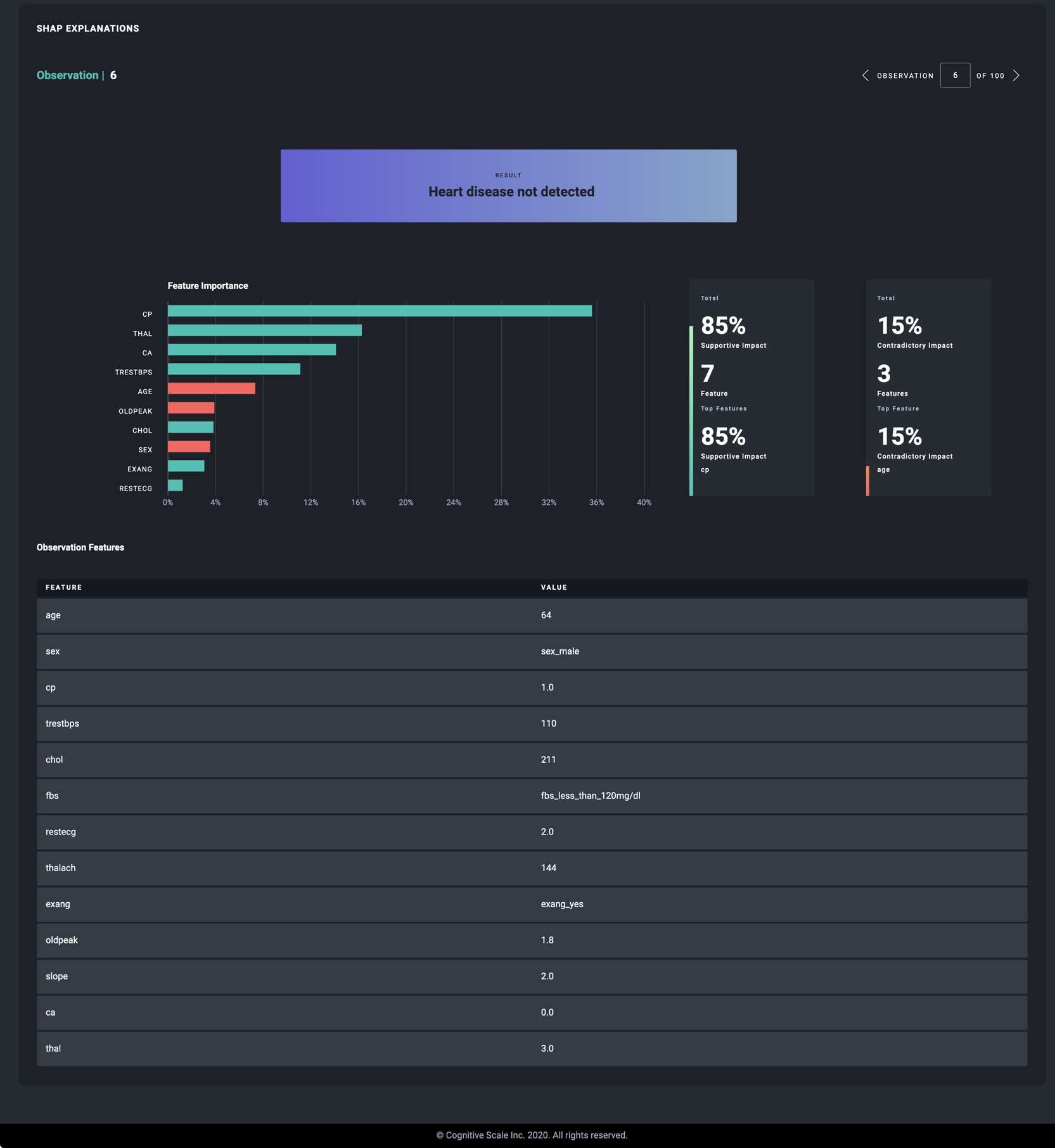

The explanations feature also offers an alternative display for SHAP results. Users can opt for SHAP explanations, counterfactual explanations, or both.

Use the SHAP notebook available in the cortex-certifai-examples repository to run a scan that generates SHAP results.

Robustness

ATX

Download counterfactuals

Enable the saving of counterfactuals for evaluations.

1. To configure saving counterfactuals, set the save_counterfactuals value to true in the evaluation section of the scan-definition.yaml file.

2. Run your scan and open the Console.

3. In the Console, find the use case and select Scan List from the vertical ellipsis menu.

4. Find the scan and select Results from the menu at the end of the row.

5. View by Model is displayed by default. Click a model chart in the row of charts beneath the summary.

6. If counterfactuals were configured to be saved, the Download button is displayed at the top right. Click the button.

7. Choose the report type you want the counterfactuals file for from the options displayed:

   - Fairness

   - Robustness

   - Explainability (counterfactuals)

   - Explainability (SHAP)

   - Explanations (Counterfactuals)

   - Explanations (SHAP)

8. A .csv file is downloaded to your local drive.

NOTE

The Download button is not displayed if counterfactual saving has not been configured for the model evaluations.
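
The configuration setting described above might look like the following fragment. This is a minimal sketch, not a complete scan definition: only the evaluation section name and the save_counterfactuals key come from this page, the exact nesting is an assumption, and every other section and key that a real scan-definition.yaml file needs is omitted.

```yaml
# scan-definition.yaml (fragment only; all other sections and keys are omitted)
evaluation:
  # Assumed placement: directly under the evaluation section.
  # Setting this to true saves counterfactuals so that the Download
  # button appears in the Console results view for the scan.
  save_counterfactuals: true
```

After changing the file, run the scan again. Scans that ran before the setting was enabled would not be expected to offer the Download button.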

Links

For more information about how to configure the scan definition to display the visualizations above, head here.

Cortex Certifai
